The Philosophy of the Component

In the engineering of complex systems, we often find that the most profound truths are hidden not in the addition of features, but in their systematic removal. This is the essence of the ablation study—a rigorous, clinical dissection of a model’s soul to understand which organs are vital and which are vestigial. As we scale Text-to-Image (T2I) architectures, we are no longer just training pixels; we are mapping the latent geometry of human concepts.

To build for the next decade of generative media, we must look closely at the empirical evidence provided by recent ablation benchmarks. The lessons learned here dictate the scalability, efficiency, and philosophical grounding of our future frameworks.

1. The Primacy of Data Topology: Beyond Raw Volume

For years, the industry mantra was ‘more is better.’ Ablation studies on models like Stable Diffusion 3 and DALL-E 3 have corrected this course. The evidence now suggests that data quality and caption fidelity can matter more than raw dataset size.

- The Synthetic Feedback Loop: Ablations show that re-captioning legacy datasets with highly descriptive synthetic text (generated by vision-language models) substantially improves prompt adherence. It turns out the bottleneck wasn’t the image variety, but the linguistic noise in the original alt-text.

- Aspect Ratio Distribution: The move from center-cropping to ‘bucketing’ (training on variable aspect ratios) wasn’t just a UX improvement; it was a structural necessity. Ablations indicate that center-cropping routinely cuts subjects out of the frame during training, so the model learns to reproduce ‘severed’ subjects. Scalability requires respecting the original composition of the world.
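The bucketing idea can be sketched in a few lines. This is a minimal illustration, not any particular model's pipeline: the bucket resolutions and the helper names `nearest_bucket` and `group_by_bucket` are assumptions made for the example. Each image is assigned to the bucket whose aspect ratio is closest to its own, so a batch can be drawn from a single bucket and resized without a center crop.

```python
import math
from collections import defaultdict

# Hypothetical bucket resolutions (width, height), each close to one megapixel.
BUCKETS = [(1024, 1024), (1152, 896), (896, 1152), (1344, 768), (768, 1344)]


def nearest_bucket(width, height, buckets=BUCKETS):
    """Return the bucket whose aspect ratio is closest to the image's.

    Ratios are compared on a log scale so that 2:1 and 1:2 are treated
    as equally far from square.
    """
    ratio = math.log(width / height)
    return min(buckets, key=lambda b: abs(math.log(b[0] / b[1]) - ratio))


def group_by_bucket(sizes):
    """Map each bucket to the indices of the images assigned to it."""
    groups = defaultdict(list)
    for index, (width, height) in enumerate(sizes):
        groups[nearest_bucket(width, height)].append(index)
    return dict(groups)


if __name__ == "__main__":
    sizes = [(4000, 3000), (1080, 1920), (500, 500), (800, 600)]
    for bucket, indices in group_by_bucket(sizes).items():
        print(bucket, indices)
```

Batches are then sampled within one bucket at a time, so every image in a batch shares a resolution while the dataset as a whole keeps its mix of compositions.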

2. Architectural Convergence: From UNet to Diffusion Transformers (DiT)

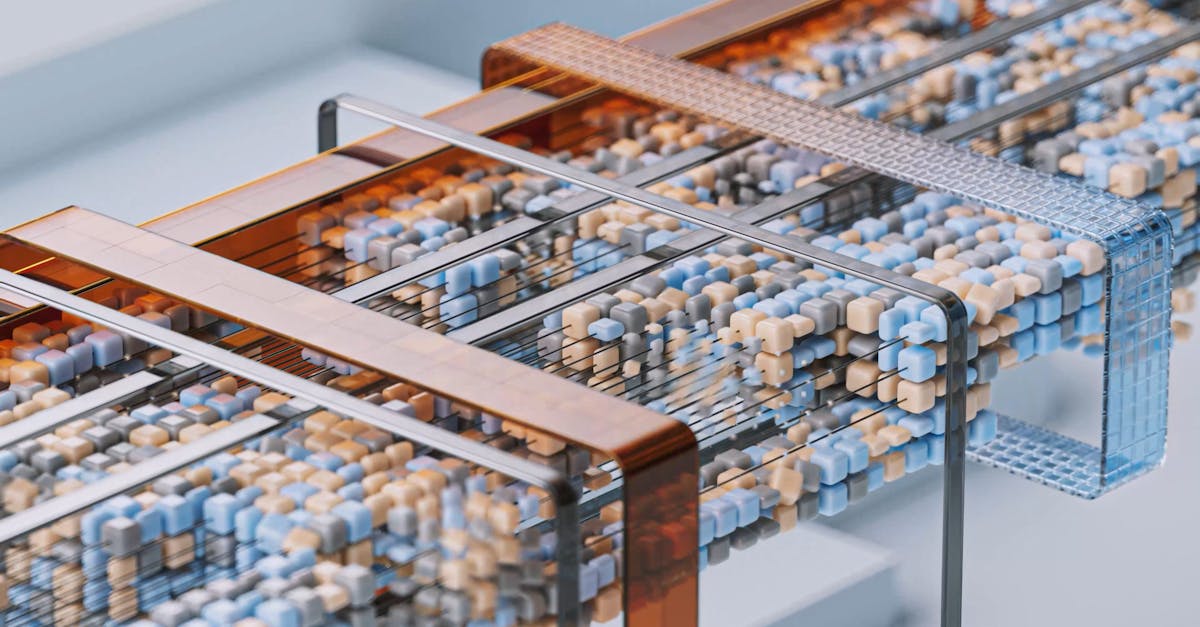

We are witnessing a paradigm shift in the underlying backbone of T2I models. The transition from the traditional convolutional UNet to the Diffusion Transformer (DiT) is a testament to the pursuit of predictable scaling laws.

Comparisons suggest that while UNets are computationally efficient at lower resolutions, Transformers model global context more directly, because self-attention relates every image patch to every other patch. From an architect’s perspective, this is a move toward modular scalability. By treating image patches as tokens, we align image generation with the scaling successes of Large Language Models, allowing us to leverage the same optimization primitives across modalities.
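To make ‘patches as tokens’ concrete, here is a minimal sketch of the patchify step on a single-channel grid. The function name `patchify` and the list-of-rows representation are assumptions chosen for readability; a real DiT applies the same idea to multi-channel latents and follows it with a learned linear projection.

```python
def patchify(image, patch):
    """Split an H x W grid (a list of rows) into flattened patch tokens.

    Patches are visited in row-major order, and each patch is flattened
    row by row into one token of length patch * patch.
    """
    height, width = len(image), len(image[0])
    if height % patch or width % patch:
        raise ValueError("image sides must be divisible by the patch size")
    tokens = []
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            token = [
                image[top + dy][left + dx]
                for dy in range(patch)
                for dx in range(patch)
            ]
            tokens.append(token)
    return tokens


if __name__ == "__main__":
    # A 4 x 4 grid holding the values 0..15.
    grid = [[row * 4 + col for col in range(4)] for row in range(4)]
    for token in patchify(grid, patch=2):
        print(token)
```

Once the image is a sequence of tokens, the rest of the backbone can be an ordinary Transformer stack, which is what lets the same optimization tooling carry over from language models.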

3. The Latent Space Bottleneck: VAE Dynamics

The Variational Autoencoder (VAE) is the silent gatekeeper of image quality. Ablation studies focused on the ‘latent squeeze’ show that if the compression factor is too high, no amount of diffusion training can recover the lost textures.

However, the lesson for architects is one of balance. A larger latent space raises the cost of the diffusion process steeply: halving the downsampling factor quadruples the number of latent positions, and in a Transformer backbone the cost of self-attention grows with the square of that count. The sweet spot, often an 8x or 16x downsampling, is where the philosophy of ‘sufficient representation’ meets the reality of ‘hardware constraints.’ We are designing a lossy bridge between the infinite continuous world and the finite discrete computer.
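The trade-off is easy to put into numbers. The sketch below is back-of-the-envelope arithmetic under stated assumptions: a square 1024-pixel image, a Transformer backbone with a patch size of 2, and attention cost taken as proportional to the square of the token count. The function names are illustrative, and convolutional layers or a different patch size would change the figures.

```python
def latent_tokens(image_side, downsample, patch=2):
    """Number of tokens for a square image after VAE downsampling and patching."""
    latent_side = image_side // downsample
    return (latent_side // patch) ** 2


def relative_attention_cost(image_side, downsample, baseline_downsample=8, patch=2):
    """Self-attention cost relative to the baseline, assuming cost ~ tokens ** 2."""
    tokens = latent_tokens(image_side, downsample, patch)
    baseline = latent_tokens(image_side, baseline_downsample, patch)
    return (tokens / baseline) ** 2


if __name__ == "__main__":
    for factor in (4, 8, 16):
        print(
            f"{factor}x downsampling:",
            latent_tokens(1024, factor), "tokens,",
            relative_attention_cost(1024, factor), "x attention cost vs 8x",
        )
```

Under these assumptions, moving from 8x to 4x downsampling multiplies the token count by four and the attention cost by sixteen, while 16x cuts attention cost to one sixteenth at the price of a much tighter bottleneck.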

4. Noise Schedules and the Geometry of Time

Perhaps the most abstract lesson comes from the study of noise schedules. The path from pure Gaussian noise to a coherent image is not linear. Ablations on ‘Zero-Terminal SNR’ (Signal-to-Noise Ratio) have shown that traditional training schedules never actually reached ‘pure’ noise: a small residue of signal survives at the final timestep, while sampling begins from noise with no signal at all. That mismatch left models struggling to generate very dark or very bright images.

By correcting the noise schedule, we ensure the model understands the full dynamic range of the visual spectrum. This is a reminder that in architecture, the boundary conditions—the start and end of a process—define the stability of the entire system.
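One commonly described correction shifts and rescales the square root of the cumulative signal coefficient so that it reaches exactly zero at the final step while the first step is left unchanged. The sketch below illustrates that rescaling under assumptions: the linear beta schedule and its endpoints are illustrative defaults, and the function names are invented for this example rather than taken from any library.

```python
import math


def linear_betas(steps=1000, start=1e-4, end=0.02):
    """An illustrative linear beta schedule; the endpoints are assumptions."""
    return [start + (end - start) * i / (steps - 1) for i in range(steps)]


def cumulative_alphas(betas):
    """Running product of (1 - beta): the share of signal kept at each step."""
    values, product = [], 1.0
    for beta in betas:
        product *= 1.0 - beta
        values.append(product)
    return values


def rescale_zero_terminal_snr(betas):
    """Rescale betas so the final step holds pure noise (SNR of zero)."""
    sqrt_ab = [math.sqrt(a) for a in cumulative_alphas(betas)]
    first, last = sqrt_ab[0], sqrt_ab[-1]
    # Shift so the last value becomes 0, then scale so the first is preserved.
    scaled = [(s - last) * first / (first - last) for s in sqrt_ab]
    alpha_bar = [s * s for s in scaled]
    # Recover per-step alphas from ratios of consecutive cumulative values.
    alphas = [alpha_bar[0]] + [
        alpha_bar[i] / alpha_bar[i - 1] for i in range(1, len(alpha_bar))
    ]
    return [1.0 - a for a in alphas]


if __name__ == "__main__":
    betas = linear_betas()
    fixed = rescale_zero_terminal_snr(betas)
    print("terminal signal before:", cumulative_alphas(betas)[-1])
    print("terminal signal after: ", cumulative_alphas(fixed)[-1])
```

Because the final beta becomes 1, a model trained on the rescaled schedule can no longer predict noise at the last step in the usual way, so this fix is typically paired with a different prediction target; that pairing is outside the scope of this sketch.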

The Vision Forward

The trajectory is clear: we are moving away from monolithic, ‘black-box’ training toward a modular, evidence-based construction of latent space. The lessons from ablations tell us that the future of T2I is not just about more GPUs; it is about the precision of our data pipelines, the scalability of our transformer backbones, and a deeper respect for the mathematical representation of visual reality.

As architects, our role is to ensure that these systems do not just generate images, but understand the underlying structure of the concepts they are tasked to manifest.