Custom Kernels for All: A Fast Track to Unmaintainable Garbage

The latest industry “breakthrough” is here: Claude and Codex can now write custom GPU kernels for the masses. Finally, the “democratization” of low-level systems programming. If you listen to the hype, we’re all just one prompt away from outperforming cuBLAS and hand-tuned assembly.

As an engineer who has spent more time debugging race conditions than I care to admit, I don’t see progress here. I see a looming disaster for anyone who cares about ROI or system stability.

The “Hello World” Trap

It’s easy to get an LLM to spit out a basic matrix multiplication kernel in Triton or CUDA. It looks clean. It compiles. It might even pass a unit test with a 32×32 matrix.

But here is the reality check: writing a kernel is 5% of the job. The other 95% is understanding occupancy, register pressure, memory coalescing, and the specific architecture of the hardware you’re actually running on. LLMs are great at averaging out the internet’s boilerplate. They are remarkably bad at understanding the subtle hardware quirks that differentiate a “custom kernel” from “code that is actually slower than the stock library.”
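To make the 32×32 trap concrete, here is a toy sketch in plain Python, not Triton or CUDA, that emulates a tiled matmul whose launch grid is computed as `n // TILE`. The tile size of 16 and all function names are hypothetical, chosen only for illustration. Because 32 divides evenly into 16-wide tiles, the small test passes. On a 33×33 input the last row and column are never computed.

```python
# Toy emulation of a tiled matmul "kernel" in plain Python.
# Hypothetical names: TILE, make_matrix, matmul_reference, matmul_tiled_buggy.

TILE = 16  # hypothetical tile size: each "block" computes a TILE x TILE patch of C


def make_matrix(n, seed):
    """Deterministic small-integer matrix so results compare exactly."""
    return [[(i * 3 + j * 5 + seed) % 7 + 1 for j in range(n)] for i in range(n)]


def matmul_reference(a, b):
    """Plain triple-loop matmul used as ground truth."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def matmul_tiled_buggy(a, b):
    """Tiled matmul whose grid size silently drops the remainder tiles."""
    n = len(a)
    c = [[0] * n for _ in range(n)]
    # Bug: floor division launches only full tiles. A correct launch would use
    # ceil(n / TILE) blocks and mask out-of-range indices inside each tile.
    grid = n // TILE
    for bi in range(grid):
        for bj in range(grid):
            for i in range(bi * TILE, (bi + 1) * TILE):
                for j in range(bj * TILE, (bj + 1) * TILE):
                    c[i][j] = sum(a[i][k] * b[k][j] for k in range(n))
    return c


if __name__ == "__main__":
    for n in (32, 33):
        a, b = make_matrix(n, 1), make_matrix(n, 2)
        ok = matmul_tiled_buggy(a, b) == matmul_reference(a, b)
        print(f"{n}x{n}: {'pass' if ok else 'FAIL'}")
```

Running it prints `32x32: pass` followed by `33x33: FAIL`. The code compiles, looks clean, and passes the test it was given; it is simply wrong for any size that is not a multiple of the tile width.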

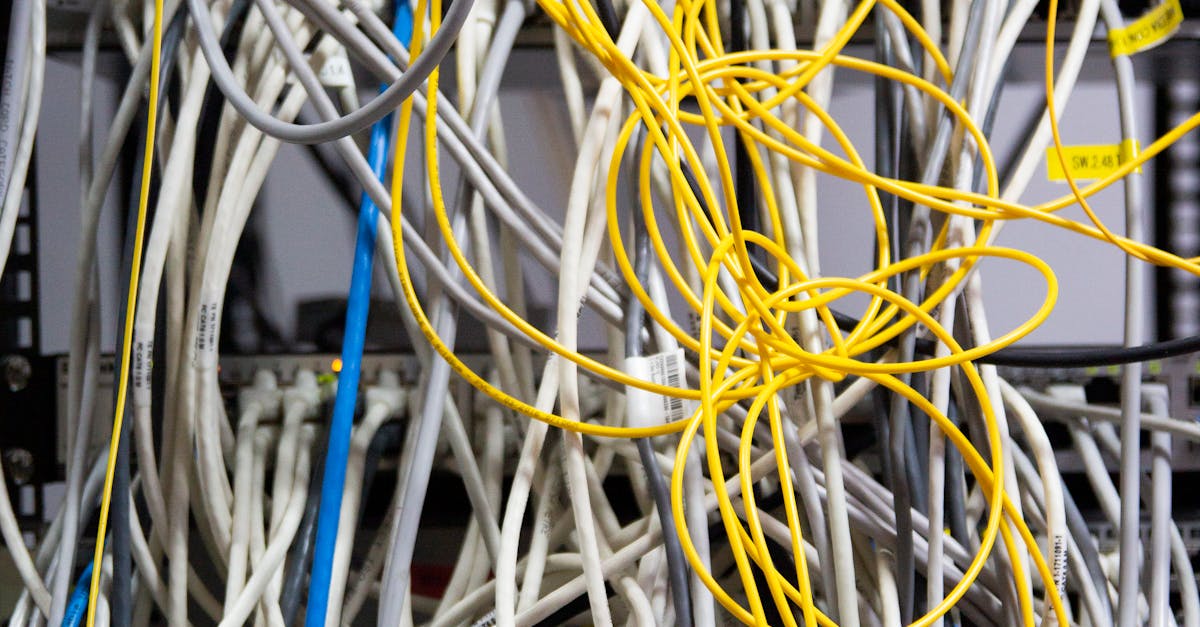

The Maintenance Nightmare

Who maintains the code Claude wrote? When the next generation of hardware drops and the memory hierarchy changes, who is going to refactor that “optimized” kernel?

We are trading a few hours of development time for a lifetime of technical debt. If you didn’t have the expertise to write the kernel yourself, you certainly don’t have the expertise to debug it when it produces an intermittent NaN roughly once every 10,000 iterations. You’ve essentially outsourced your core logic to a black box that doesn’t understand the physics of the chip it’s targeting.
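One well-known way to get the kind of sporadic NaN described above is that floating-point addition is not associative. A parallel reduction whose order depends on scheduling (floating-point atomic adds on a GPU, for example) can return different results for identical inputs. The toy below runs on the CPU in plain Python, and its function names and the reordering in `interleaved_sum` are hypothetical. It sums the same four values in three orders. The exact sum is 0, yet the results differ. This illustrates one mechanism only, not the cause of any particular bug.

```python
import math

# Four values whose exact sum is 0, but whose partial sums can overflow float64.
VALUES = [1e308, 1e308, -1e308, -1e308]


def sequential_sum(xs):
    """Left-to-right accumulation, like a single thread looping over the data."""
    total = 0.0
    for x in xs:
        total += x  # 1e308 + 1e308 overflows to inf and never recovers
    return total


def tree_sum(xs):
    """Pairwise (tree) reduction, the shape of a typical parallel reduction."""
    if len(xs) == 1:
        return xs[0]
    mid = len(xs) // 2
    # Left half overflows to inf, right half to -inf; inf + (-inf) is nan.
    return tree_sum(xs[:mid]) + tree_sum(xs[mid:])


def interleaved_sum(xs):
    """Same data, accumulated in the order another schedule might produce."""
    order = [0, 2, 1, 3]  # hypothetical arrival order of partial results
    return sequential_sum([xs[i] for i in order])


if __name__ == "__main__":
    for name, fn in [("sequential", sequential_sum), ("tree", tree_sum),
                     ("interleaved", interleaved_sum)]:
        result = fn(VALUES)
        flag = "  <- NaN" if math.isnan(result) else ""
        print(f"{name:>11}: {result}{flag}")
```

The three orders yield `inf`, `nan`, and `0.0`. On real hardware the order can change from run to run, which is why such a failure shows up rarely and refuses to reproduce on demand.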

ROI or Just Noise?

The industry is currently obsessed with “custom.” But unless you are operating at the scale of a hyperscaler, the ROI on a custom kernel is usually negative. Standard libraries—the ones optimized by teams of people who actually understand silicon—are almost always the better choice.

Generating a custom kernel via an LLM is the ultimate “premature optimization.” You’re adding layers of complexity to your stack for a marginal gain—if any—while introducing a massive liability into your most critical execution path.

The Verdict

If you need a custom kernel, hire a systems engineer who understands cache lines and warp schedulers. If you don’t need one, stick to the libraries. Letting a chatbot write your low-level code isn’t “democratization”; it’s just delegating your future outages to a probabilistic text generator. Stop chasing the hype and go back to writing code you can actually support.