The temporary closure of Clarkesworld’s submission portal wasn’t merely a literary crisis; it was a systemic failure of traditional validation layers. When a prestigious magazine is forced to stop accepting submissions because the cost of producing content has fallen to nearly zero, we are witnessing the equivalent of a Distributed Denial of Service (DDoS) attack on human discernment.

From a systems-architecture perspective, the problem isn’t the AI itself, but the collapse of the friction-based gatekeeping that has historically stabilized our information ecosystems.

The Scalability of Intent

Historically, the barrier to entry for any creative or professional institution was the ‘proof of work’ inherent in the human creative process. Writing a short story required time, cognitive load, and iterative refinement. These constraints acted as a natural filter, keeping the volume of submissions within the processing capacity of human editors.

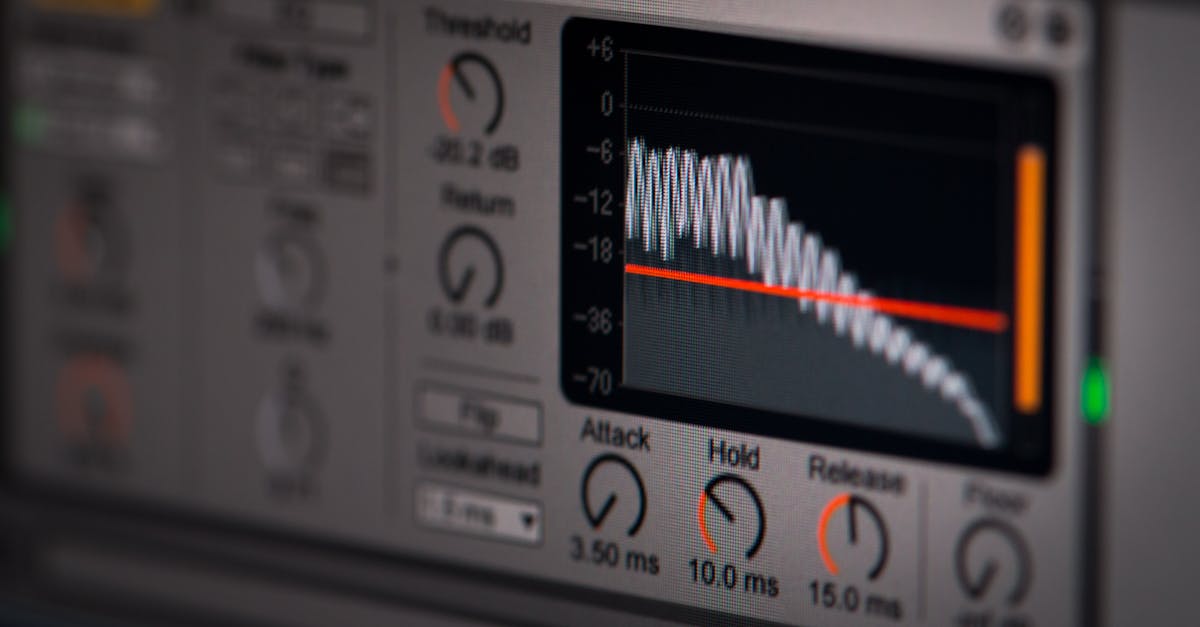

When submitters began feeding detailed guidelines into Large Language Models (LLMs), they bypassed this physical and cognitive latency. They effectively automated the ‘request’ phase of the system without any corresponding increase in the ‘processing’ capacity of the editors. This is a classic architectural mismatch: a high-throughput input stream hitting a legacy, low-throughput processing node.
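The mismatch can be made concrete with a few lines of arithmetic. The sketch below is illustrative only: the function name and every number in it (40 and 500 submissions per day, an editorial capacity of 50 per day) are assumptions chosen for the example, not figures reported by any magazine.

```python
def backlog_over_time(arrivals_per_day, capacity_per_day, days):
    """Return the unread-submission backlog at the end of each day.

    Toy model: a fixed number of submissions arrives daily and the
    editors can read a fixed number daily. All figures are hypothetical.
    """
    backlog = 0
    history = []
    for _ in range(days):
        # The backlog grows by the shortfall and can never go below zero.
        backlog = max(0, backlog + arrivals_per_day - capacity_per_day)
        history.append(backlog)
    return history


# Hypothetical "human-paced" inflow: slightly below editorial capacity.
human_era = backlog_over_time(arrivals_per_day=40, capacity_per_day=50, days=30)

# Hypothetical "automated" inflow: ten times editorial capacity.
llm_era = backlog_over_time(arrivals_per_day=500, capacity_per_day=50, days=30)

print(human_era[-1])  # 0
print(llm_era[-1])    # 13500
```

Once arrivals exceed capacity, the backlog grows linearly and never recovers. Under these assumptions, closing the intake is the only move left to a queue with no admission control.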

The Arms Race Fallacy

The current obsession with AI detectors is a flawed structural patch. In an adversarial setting, the generator tends to hold the advantage: a detector can only be trained on the output of models that already exist, so it lags each new release. Trying to contain a flood of generated text with probabilistic detection is a losing strategy, because every misclassification either admits machine output or rejects a human author.

As architects, we know that we cannot secure a system by merely reacting to the symptoms of a breach. We must address the underlying protocol. The ‘arms race’ is a distraction from the fundamental reality: we are entering an era where text, by itself, no longer carries the metadata of its own authenticity.

Toward a New Architecture of Trust

If we cannot detect the machine, we must instead verify the human. This is a philosophical shift in how we structure digital interactions. The future of institutional integrity will likely depend on three architectural pillars:

1. Deterministic Provenance: Moving away from analyzing the output and toward verifying the process. This involves cryptographic logging of the creative journey: version history, time-stamped drafts, and verified identity layers.

2. Proof of Stake/Effort: Re-introducing friction into the system. This could take the form of micropayments for submissions or tiered reputation systems that require a history of verified human interaction.

3. The Signal-to-Noise Filter: Instead of fighting the flood, institutions must build more robust, AI-augmented triage systems that don’t just ‘detect’ AI, but prioritize ‘intent’ and ‘originality’ through deeper semantic analysis.
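As one illustration of the first pillar, the cryptographic logging of drafts could be sketched as a hash chain, where each log entry commits to the one before it. This is a minimal sketch under stated assumptions, not a proposed standard: the function names, the entry fields, and the sample drafts are all hypothetical, and only Python's standard `hashlib` and `json` modules are used.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def append_draft(log, text, timestamp):
    """Append a draft to the log, chaining it to the previous entry."""
    entry = {
        "timestamp": timestamp,  # self-reported by the author (assumption)
        "draft_hash": hashlib.sha256(text.encode("utf-8")).hexdigest(),
        "prev_hash": log[-1]["entry_hash"] if log else GENESIS,
    }
    payload = json.dumps(entry, sort_keys=True).encode("utf-8")
    entry["entry_hash"] = hashlib.sha256(payload).hexdigest()
    log.append(entry)
    return entry


def verify_log(log):
    """Return True if no entry was altered, removed, or reordered."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        if entry["prev_hash"] != prev_hash:
            return False
        if hashlib.sha256(payload).hexdigest() != entry["entry_hash"]:
            return False
        prev_hash = entry["entry_hash"]
    return True


log = []
append_draft(log, "Opening paragraph, first attempt.", "2023-02-01T09:00:00Z")
append_draft(log, "Opening paragraph, revised.", "2023-02-03T18:30:00Z")
print(verify_log(log))  # True

# Back-dating an earlier entry breaks the chain.
log[0]["timestamp"] = "2022-01-01T00:00:00Z"
print(verify_log(log))  # False
```

A chain like this only makes tampering evident after the fact. It does not prove that a human wrote the drafts, and the timestamps are self-reported unless an independent party countersigns them, which is why the pillar pairs version history with verified identity layers.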

The Philosophical Debt

We have spent decades building systems optimized for the frictionless flow of information. Now, we are paying the ‘philosophical debt’ of that design. Friction, it turns out, was a feature, not a bug. It was the anchor of our social and professional trust.

As we redesign these systems, we must look beyond the immediate noise of the AI explosion. The goal isn’t to win an unwinnable arms race; it is to build a new architecture that values the scarcity of human perspective in an ocean of automated output.